Ban it? Tax it? Humans have been hounded by these questions for millennia.

January 19, 2024

Ohio’s new marijuana law marks a watershed moment in the decriminalization of cannabis: more than half of Americans now live in places where recreational marijuana is legal. It is a profound shift, but only the latest twist in the long and winding saga of society’s relationship with pot.

Humans first domesticated Cannabis sativa around 12,000 years ago in Central and East Asia as hemp, mostly for rope and other textiles. Later, some adventurous forebears found more interesting uses. In 2008, archaeologists in northwestern China discovered almost 800 grams of dried cannabis containing high levels of THC, the psychoactive ingredient in marijuana, among the burial items of a seventh-century B.C. shaman.

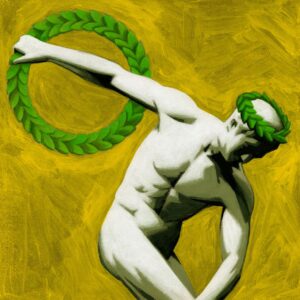

The Greeks and Romans used cannabis for hemp, medicine and possibly religious purposes, but the plant was never as pervasive in the classical world as it was in ancient India. Cannabis indica, the sacred plant of the god Shiva, was revered for its ability to relieve physical suffering and bring spiritual enlightenment to the holy.

Cannabis gradually spread across the Middle East in the form of hashish, which is smoked or eaten. The first drug laws were enacted by Islamic rulers who feared their subjects wanted to do little else. King al-Zahir Baybars in Egypt banned hashish cultivation and consumption in 1266. When that failed, a successor tried taxing hashish instead in 1279. This filled local coffers, but consumption levels soared and the ban was restored.

The march of cannabis continued unabated across the old and new worlds, apparently reaching Stratford-upon-Avon by the 16th century. Fragments of some 400-year-old tobacco pipes excavated from Shakespeare’s garden were found to contain cannabis residue. If not the Bard, at least someone in the household was having a good time.

By the 1600s American colonies were cultivating hemp for the shipping trade, using its fibers for rigs and sails. George Washington and Thomas Jefferson grew cannabis on their Virginia plantations, seemingly unaware of its intoxicating properties.

Veterans of Napoleon’s Egypt campaign brought hashish to France in the early 1800s, where efforts to ban the habit may have enhanced its popularity. Members of the Club des Hashischins, which included Charles Baudelaire, Honoré de Balzac, Alexandre Dumas and Victor Hugo, would meet to compare notes on their respective highs.

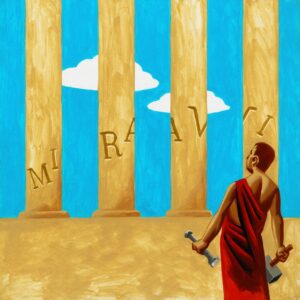

ILLUSTRATION: THOMAS FUCHS

Although Queen Victoria’s own physician advocated using cannabis to relieve childbirth and menstrual pains, British lawmakers swung back and forth over whether to tax or ban its cultivation in India.

In the U.S., however, Americans lumped cannabis with the opioid epidemic that followed the Civil War. Early 20th-century politicians further stigmatized the drug by associating it with Black people and Latino immigrants. Congress outlawed nonmedicinal cannabis in 1937, a year after the movie “Reefer Madness” portrayed pot as a corrupting influence on white teenagers.

American views of cannabis have changed since President Nixon declared an all-out War on Drugs more than 50 years ago, yet federal law still classifies the drug alongside heroin. As lawmakers struggle to catch up with the zeitgeist, two things remain certain: Governments are often out of touch with their citizens, and what people want isn’t always what’s good for them.