Just be thankful that your teeth aren’t drilled with a flint or numbed with cocaine

November 3, 2022

Since the start of the pandemic, a number of studies have uncovered a surprising link: The presence of gum disease, the first sign often being bloody gums when brushing, can make a patient with Covid three times more likely to be admitted to the ICU with complications. Putting off that visit to the dental hygienist may not be such a good idea just now.

Many of us have unpleasant memories of visits to the dentist, but caring for our teeth has come a very long way over the millennia. What our ancestors endured is incredible. In 2006 an Italian-led team of evolutionary anthropologists working in Balochistan, in southwestern Pakistan, found several 9,000-year-old skeletons whose infected teeth had been drilled using a pointed flint tool. Attempts at re-creating the process found that the patients would have had to sit still for up to a minute. Poppies grow in the region, so there may have been some local expertise in using them for anesthesia.

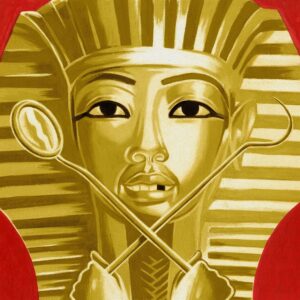

Acupuncture may have been used to treat tooth pain in ancient China, but the chronology remains uncertain. In ancient Egypt, dentistry was considered a distinct medical skill, but the Egyptians still had some of the worst teeth in the ancient world, mainly from chewing on food adulterated with grit and sand. They were averse to dental surgery, relying instead on topical pain remedies such as amulets, mouthwashes and even pastes made from dead mice. Faster relief could be obtained in Rome, where tooth extractions and dentures were widely available.

ILLUSTRATION: THOMAS FUCHS

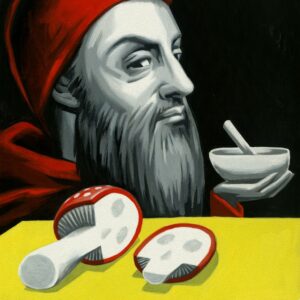

In Europe during the Middle Ages, dentistry fell under the purview of barbers. They could pull teeth but little else. The misery of mouth pain continued unabated until the early 18th century, when the French physician Pierre Fauchard became the first doctor to specialize in teeth. A rigorous scientist, Fauchard helped to found modern dentistry by recording his innovative methods and discoveries in a two-volume work, “The Surgeon Dentist.”

Fauchard elevated dentistry to a serious profession on both sides of the Atlantic. Before he became famous for his midnight ride, Paul Revere earned a respectable living making dentures. George Washington’s false teeth were actually a marvel of colonial-era technology, combining human teeth with elephant and walrus ivory to create a realistic look.

During the 1800s, the U.S. led the world in dental medicine, not only as an academic discipline but also in the standardization of the practice, from the use of automatic drills to the dentist’s chair.

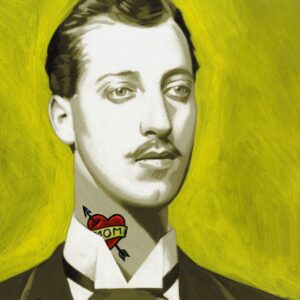

Perhaps the biggest breakthrough was the invention of local anesthetic in 1884. In July of that year, Sigmund Freud published a paper in Vienna on the potential uses of cocaine. Four months later in America, Richard Hall and William S. Halsted, whose pioneering work included the first radical mastectomy for breast cancer, decided to test cocaine’s numbing properties by injecting it into their dental nerves. Hall had an infected incisor filled without feeling a thing.

For patients, the experiment was a miracle. For Hall and Halsted, it was a disaster; both became cocaine addicts. Fortunately, dental surgery would be made safe by the invention of non-habit-forming Novocain 20 years later.

With orthodontics, veneers, implants and teeth whiteners, dentists can give anyone a beautiful smile nowadays. But the main thing is that oral care doesn’t have to hurt, and it could just save your life. So make that appointment.