The 20th-century surgeon Frederic Mohs made a key breakthrough in treating a disease first described in ancient Greece.

June 30, 2022

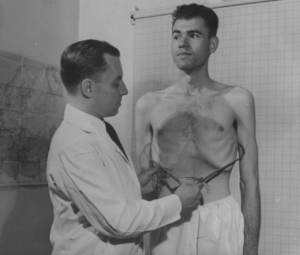

July 1 marks the 20th anniversary of the death of Dr. Frederic Mohs, the Wisconsin surgeon who revolutionized the treatment of skin cancer, the most common form of cancer in the U.S. Before Mohs achieved his breakthrough in 1936, the best available treatment was drastic surgery without even the certainty of a cure.

Skin cancer is by no means a new illness or confined to one part of the world; paleopathologists have found evidence of it in the skeletons of 2,400-year-old Peruvian mummies. But it wasn’t recognized as a distinct cancer by ancient physicians. Hippocrates in the 5th century B.C. came the closest, noting the existence of deadly “black tumors (melas oma) with metastasis.” He was almost certainly describing malignant melanoma, a skin cancer that spreads quickly, as opposed to the other two main types, basal cell and squamous cell carcinoma.

ILLUSTRATION: THOMAS FUCHS

After Hippocrates, nearly 2,000 years elapsed before earnest discussions about black metastasizing tumors began to appear in medical writings. The first surgical removal of a melanoma took place in London in 1787. The surgeon involved, a Scotsman named John Hunter, was mystified by the large squishy thing he had removed from his patient’s jaw, calling it a “cancerous fungus excrescence.”

The “fungoid disease,” as some referred to skin cancer, yielded up its secrets by slow degrees. In 1806 René Laënnec, the inventor of the stethoscope, published a paper in France on the metastatic properties of “La Melanose.” Two decades later, Arthur Jacob in Ireland identified basal cell carcinoma, which was initially referred to as “rodent ulcer” because the ragged edges of the tumors looked as though they had been gnawed by a mouse.

By the beginning of the 20th century, doctors had become increasingly adept at identifying skin cancers in animals as well as humans, making the lack of treatment options all the more frustrating. In 1933, Mohs was a 23-year-old medical student assisting on cancer research in rats when he noticed the destructive effect of zinc chloride on malignant tissue. Excited by its potential, within three years he had developed a zinc chloride paste and a technique for using it on cancerous lesions.

He initially described it as “chemosurgery” since the cancer was removed layer by layer. The results for his patients, all of whom were inmates of either the local prison or the mental health hospital, were astounding. Even so, his method was so novel that the Dane County Medical Association in Wisconsin accused him of quackery and tried to revoke his medical license.

Mohs continued to encounter stiff resistance until the early 1940s, when the Quislings, a prominent Wisconsin family, turned to him out of sheer desperation. Their son, Abe, had a lemon-sized tumor on his neck which other doctors had declared to be inoperable and fatal. His recovery silenced Mohs’s critics, although the doubters remained an obstacle for several more decades. Nowadays, a modern version of “Mohs surgery,” using a scalpel instead of a paste, is the gold standard for treating many forms of skin cancer.